Color looks right on your screen. Your supplier confirms the batch passed inspection. But when the physical SPC sample reaches the buyer, the color suddenly “doesn’t match.”

This type of color approval dispute is common in SPC (stone plastic composite) flooring supply chains. It often leads to resampling rounds, shipment delays, and strained supplier relationships. Color control matters most where rigid vinyl flooring must keep its decorative layer consistent from one production batch to the next.

The problem usually isn’t about who is right or wrong. It’s that different teams evaluate the same color under different technical conditions — lighting environments, display calibration, measurement formulas, and material surfaces.

This guide explains why buyers and suppliers disagree during SPC color approval, and identifies the most common technical factors behind those disagreements. More importantly, it outlines practical steps manufacturers and buyers can take to prevent color disputes before samples enter the approval process.

Delta E (ΔE), the measured color difference between a sample and its standard, tells the real story:

| Delta E | What the Buyer Sees |
|---|---|
| 0–1.0 | Nothing: ideal |
| 1.0–2.0 | Hard to see: acceptable |
| 2.0–3.5 | Noticeable: flagged for review |
| 3.5–5.0 | Wrong: correction required |
| >5.0 | Rework. No debate. |

Most disputes land in that 2.0–3.5 range — the gray zone where one side says “close enough” and the other says “no way.”
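As a minimal sketch, the bands in the table can be written as a lookup so both sides label a reading the same way. The function names are hypothetical, and treating each upper bound as inclusive is an assumption, because the table's ranges share their boundary values.

```python
def delta_e_band(delta_e: float) -> str:
    """Map a Delta E reading to the band names used in the table above.

    Assumption: each upper bound is inclusive (1.0 counts as "ideal").
    """
    if delta_e < 0:
        raise ValueError("Delta E cannot be negative")
    if delta_e <= 1.0:
        return "ideal"
    if delta_e <= 2.0:
        return "acceptable"
    if delta_e <= 3.5:
        return "flagged for review"
    if delta_e <= 5.0:
        return "correction required"
    return "rework"


def in_gray_zone(delta_e: float) -> bool:
    """True for readings in the 2.0-3.5 band where most disputes land."""
    return delta_e_band(delta_e) == "flagged for review"
```

A reading of 2.4 lands in the gray zone, while 1.9 is labeled acceptable, even though the two may look nearly the same to a buyer.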

What SPC Color Approval Actually Measures

Here’s what nobody spells out: SPC color approval doesn’t measure what the color looks like. It measures the difference.

A spectrophotometer compares your production sample against a color standard. It outputs one number — Delta E (ΔE). That number is objective. It ignores your lighting, your monitor, and your opinion.

But both sides misread what that number means.

Buyers assume a “pass” means the color is an exact visual match. It’s not. A ΔE of 1.8 passes the test. It can still look off under a showroom’s warm LEDs compared to the lab’s D65 light source. The instrument approved it. The client’s eyes reject it. Both reactions are valid.

Suppliers assume “within tolerance” means the process is stable. It doesn’t. A batch can score ΔE 1.9 or lower on all 20 samples, every one within spec, while the control chart flags an out-of-control process: a shift or trend that has not crossed the tolerance yet. It passes today. It can fail tomorrow, and the pass/fail result alone gives no warning.

That gap is where disputes start:

– The spectrophotometer captures one controlled moment

– Your buyer sees real-world variables: angle, gloss, texture, ambient light

– “In spec” ≠ visually identical ≠ process stable

A passing number tells you the measurement cleared the threshold. It doesn’t promise the color looks right in every environment. It doesn’t confirm your process will repeat the same result next batch. Treat it as a checkpoint — not a green light.

Reason #1: Lighting Differences Create the Metamerism Effect

The sample passed. The spectrophotometer confirmed it. Then the client walked it over to their showroom floor, and the color was wrong. Dead wrong. Lighting variation changes how decorative surfaces look once flooring is installed, particularly in large commercial interiors.

That’s metamerism. Not a defect. Just physics.

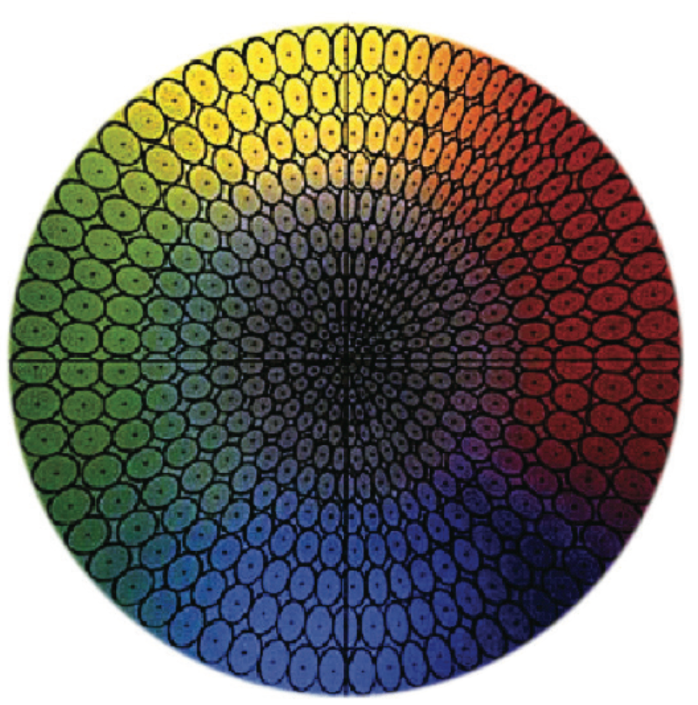

Two colors can look the same under one light source and clash under another. Their spectral reflectance curves differ and cross each other, so they add up to the same perceived color only under one illuminant. Your eyes agree, but only in that one controlled moment. Switch the light, and the whole agreement breaks down. This is why color evaluation standards specify controlled lighting conditions for laboratory color comparison.

Here’s what that gap looks like between your lab and your buyer’s space:

– Your lab: D65 daylight simulator, ~6500K, ISO 3664/ASTM D1729 controlled booth

– Their office: Natural window light shifting from 25,000K (overcast) down to 4,000K (late afternoon)

– Their showroom: Warm LED or incandescent, CCT 2700–4000K

– Their phone screen: ~5000–7000K, with varying white balance

Same tile. Four light sources. Four different color readings.

Why “Passed Under D65” Doesn’t Protect You

This failure pattern shows up in textiles and coatings all the time:

A sample matches the standard under lab D65. ΔE clears the threshold. SPC color approval goes through. Then it ships. The client views it under fluorescent or warm LED. The match falls apart. They reject it — and they’re right to.

The CIE special metamerism index (change in illuminant) measures this risk directly. It is the color difference a sample and standard show under a test illuminant, such as A or a fluorescent source, given that the pair matches under the reference illuminant. A passing ΔE under D65 alone tells you nothing about stability under your client’s lighting. It never did.

The fix isn’t arguing about the Delta E score. Test the sample under multiple illuminants, such as D65, A (incandescent), and TL84 (fluorescent), before it leaves your facility. Then compare the spectral reflectance curves of the sample and the standard. Curves of a metameric pair typically cross at three or more wavelengths, so three or more crossings is a strong warning sign. That sample will likely read differently depending on where your buyer stands.

Ship it without that check, and you’re sending a dispute along with the tile.
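The curve-crossing check can be sketched in a few lines. This is an illustration under assumptions, not a standard procedure: the function names are hypothetical, both curves are assumed to be sampled at the same wavelengths, and a crossing is counted each time the sign of the gap between the curves flips.

```python
def count_crossings(sample, standard):
    """Count how many times two spectral reflectance curves cross.

    Both arguments are sequences of reflectance values sampled at the
    same wavelengths (for example 400-700 nm in fixed steps).
    """
    if len(sample) != len(standard):
        raise ValueError("curves must be sampled at the same wavelengths")
    crossings = 0
    previous_sign = 0
    for s, r in zip(sample, standard):
        diff = s - r
        sign = (diff > 0) - (diff < 0)
        if sign == 0:
            continue  # curves touch here; wait for the next non-zero gap
        if previous_sign != 0 and sign != previous_sign:
            crossings += 1
        previous_sign = sign
    return crossings


def metamerism_warning(sample, standard, min_crossings=3):
    """Flag pairs whose curves cross at least min_crossings times."""
    return count_crossings(sample, standard) >= min_crossings
```

A flagged pair still needs a visual or instrumental check under the alternate illuminants. The crossing count is a screening step, not a verdict.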

Reason #2: Uncalibrated Screens Distort Digital Color References

Your supplier sends a digital color reference. You open it on your laptop. It looks perfect. Your buyer opens the same file on their office monitor — and flags the color as too warm.

Nobody changed the file. The monitor did it.

This is the uncalibrated display problem. It’s silent. It’s invisible. And it wrecks remote SPC color approval before anyone says a word.

Here’s what’s going on:

– A factory monitor running an uncalibrated sRGB profile renders color differently from a buyer’s wide-gamut P3 display

– Adobe RGB files viewed on a standard sRGB screen without color management get color-crushed: saturation collapses, hues shift

– Laptops, phones, and budget office monitors apply their own white point adjustments without telling anyone

The result: two people looking at one file, seeing two different colors. Both confident. Both wrong about the other.

The Fix Is a Calibration Tool — Not a Conversation

X-Rite i1Display and Datacolor Spyder are hardware calibrators used across the industry. They cost a fraction of one rejected sample round. They take fifteen minutes to run. Each one brings your display in line with a known reference standard.

Skip calibration, and digital approval becomes guesswork dressed up as process.

Your team signs off on color from an uncalibrated screen. That means you’re not approving color — you’re approving your monitor’s version of it.

Reason #3: Delta E Tolerance Is Interpreted Differently

Same sample. Same spectrophotometer reading. Two different approval decisions.

That’s the tolerance trap — and it drives 72% of supplier reliability disputes in small and mid-sized businesses. Not bad materials. Not sloppy production. Just a mismatch in how each side defines “acceptable.”

Here’s the core problem: ΔE tolerance thresholds aren’t universal. A flooring manufacturer accepts ΔE ≤ 2.0 as a clean pass. A fashion buyer rejects anything above ΔE 1.0 the moment the sample hits retail lighting. Both positions are valid. Neither side told the other.

The Formula Problem Nobody Talks About

It gets worse. Both sides may agree on a threshold number — but still run different formulas to calculate it:

| Formula | What It Prioritizes | Real-World Result |
|---|---|---|
| CMC | Lightness and chroma sensitivity | ΔE_CMC 1.2 = pass |
| CIE94 | Balanced, textile-weighted | ΔE_94 1.5 = pass |
| CIEDE2000 | Closest to human vision | ΔE_2000 1.8 = fail |

One sample. The supplier runs CMC — it passes. The buyer runs CIEDE2000 — it fails. Neither side made up their result. They just used different rulers.
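To make the “different rulers” point concrete, here is a hedged sketch that scores one color pair with two formulas. The CIE94 function uses the graphic-arts weights as an assumption, the CIEDE2000 function follows the published formula, and the shared limit of 1.5 is hypothetical. The test pair comes from the published CIEDE2000 test data of Sharma, Wu, and Dalal. It is a saturated blue, not a flooring color.

```python
import math


def delta_e_cie94(lab_std, lab_smp, k_l=1.0, k1=0.045, k2=0.015):
    """CIE94 difference; defaults are the graphic-arts weights (an assumption)."""
    l1, a1, b1 = lab_std
    l2, a2, b2 = lab_smp
    c1, c2 = math.hypot(a1, b1), math.hypot(a2, b2)
    d_c = c1 - c2
    # Hue difference squared is what remains after lightness and chroma.
    d_h_sq = max((a1 - a2) ** 2 + (b1 - b2) ** 2 - d_c ** 2, 0.0)
    s_c, s_h = 1.0 + k1 * c1, 1.0 + k2 * c1
    return math.sqrt(((l1 - l2) / k_l) ** 2 + (d_c / s_c) ** 2 + d_h_sq / s_h ** 2)


def _hue_deg(a, b):
    """Hue angle in degrees in [0, 360); 0 for a neutral color."""
    if a == 0 and b == 0:
        return 0.0
    return math.degrees(math.atan2(b, a)) % 360.0


def delta_e_ciede2000(lab_std, lab_smp, k_l=1.0, k_c=1.0, k_h=1.0):
    """CIEDE2000 difference between two CIE L*a*b* colors."""
    l1, a1, b1 = lab_std
    l2, a2, b2 = lab_smp
    c_bar = (math.hypot(a1, b1) + math.hypot(a2, b2)) / 2.0
    g = 0.5 * (1.0 - math.sqrt(c_bar ** 7 / (c_bar ** 7 + 25.0 ** 7)))
    a1p, a2p = (1.0 + g) * a1, (1.0 + g) * a2
    c1p, c2p = math.hypot(a1p, b1), math.hypot(a2p, b2)
    h1p, h2p = _hue_deg(a1p, b1), _hue_deg(a2p, b2)

    d_lp, d_cp = l2 - l1, c2p - c1p
    if c1p * c2p == 0:
        d_h_deg = 0.0
    else:
        d_h_deg = h2p - h1p
        if d_h_deg > 180.0:
            d_h_deg -= 360.0
        elif d_h_deg < -180.0:
            d_h_deg += 360.0
    d_hp = 2.0 * math.sqrt(c1p * c2p) * math.sin(math.radians(d_h_deg / 2.0))

    l_bar, c_bar_p = (l1 + l2) / 2.0, (c1p + c2p) / 2.0
    if c1p * c2p == 0:
        h_bar = h1p + h2p
    elif abs(h1p - h2p) <= 180.0:
        h_bar = (h1p + h2p) / 2.0
    elif h1p + h2p < 360.0:
        h_bar = (h1p + h2p + 360.0) / 2.0
    else:
        h_bar = (h1p + h2p - 360.0) / 2.0

    t = (1.0 - 0.17 * math.cos(math.radians(h_bar - 30.0))
         + 0.24 * math.cos(math.radians(2.0 * h_bar))
         + 0.32 * math.cos(math.radians(3.0 * h_bar + 6.0))
         - 0.20 * math.cos(math.radians(4.0 * h_bar - 63.0)))
    d_theta = 30.0 * math.exp(-(((h_bar - 275.0) / 25.0) ** 2))
    r_c = 2.0 * math.sqrt(c_bar_p ** 7 / (c_bar_p ** 7 + 25.0 ** 7))
    s_l = 1.0 + 0.015 * (l_bar - 50.0) ** 2 / math.sqrt(20.0 + (l_bar - 50.0) ** 2)
    s_c = 1.0 + 0.045 * c_bar_p
    s_h = 1.0 + 0.015 * c_bar_p * t
    r_t = -math.sin(math.radians(2.0 * d_theta)) * r_c

    term_l = d_lp / (k_l * s_l)
    term_c = d_cp / (k_c * s_c)
    term_h = d_hp / (k_h * s_h)
    return math.sqrt(term_l ** 2 + term_c ** 2 + term_h ** 2 + r_t * term_c * term_h)


def verdicts(lab_std, lab_smp, threshold):
    """Apply one shared threshold to both formulas."""
    scores = {
        "CIE94": delta_e_cie94(lab_std, lab_smp),
        "CIEDE2000": delta_e_ciede2000(lab_std, lab_smp),
    }
    return {name: "pass" if value <= threshold else "fail" for name, value in scores.items()}


STANDARD = (50.0, 2.6772, -79.7751)  # published CIEDE2000 test pair
SAMPLE = (50.0, 0.0, -82.7485)
RESULT = verdicts(STANDARD, SAMPLE, threshold=1.5)  # hypothetical limit
```

For this pair the sketch gives roughly ΔE_94 ≈ 1.40 and ΔE_2000 ≈ 2.04, so the same limit of 1.5 yields a pass under one formula and a fail under the other.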

Industry norms differ just as sharply:

– Textiles: ΔE ≤ 1.0

– Plastics/SPC: ΔE ≤ 1.5

– Packaging print: ΔE ≤ 2.0

What a Vague Contract Costs You

Contract language like “color to be approved”, with no formula, no threshold, and no viewing condition, all but guarantees a dispute. The supplier measures ΔE 1.8 and ships with confidence. The buyer sees a mismatch and rejects it with equal confidence.

Fix this before the sample ships. Lock the formula. Lock the threshold. Lock the viewing condition. Get it in writing.

Reason #4: Approved Reference Samples Drift Over Time

Physical color standards degrade. That’s not a theory — it’s a measurement problem hiding in plain sight.

The sample your buyer approved six months ago has been sitting in a drawer, or out under fluorescent light, in a warehouse with fluctuating humidity. It faded. It shifted. And no one updated the reference point.

So now your production matches the original SPC color approval standard. But that standard no longer matches what the buyer remembers approving.

The Standard Drifts — And Nobody Tracks It

Here’s how this plays out:

– Pigment fading changes the standard’s spectrophotometer reading over time

– Surface oxidation on vinyl and PVC samples shifts the L* value, sometimes by more than ΔE 1.5

– Handling and contamination add new variables each time someone pulls the sample for comparison

The buyer is rejecting your production batch against a ghost reference — a sample that no longer represents the original approval decision.

What Good Practice Looks Like

Standard samples need expiration logic. ISO/IEC 17025-compliant labs track reference material drift through recalibration intervals and trend monitoring. They flag any standard that has moved beyond its original certified value.

Apply that same discipline to your physical color standards:

– Re-measure every standard sample at defined intervals, every quarter at minimum

– Log the ΔE drift between current readings and the original certification measurement

– Replace any standard showing cumulative drift beyond ΔE 0.5 from baseline

A standard that drifts without being retired corrupts every approval decision built on it. The dispute isn’t about your production quality. It’s about the ruler changing shape between measurements.
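A drift log along these lines could look like the sketch below. The class and function names are hypothetical, and CIE76 (plain Euclidean distance in L*a*b*) stands in as an assumption, since the text does not name a formula for drift. In practice, use the formula locked in the contract.

```python
import math
from dataclasses import dataclass
from datetime import date

DRIFT_LIMIT = 0.5  # retirement threshold from the text


@dataclass
class StandardReading:
    measured_on: date
    lab: tuple  # (L*, a*, b*) of the physical standard


def delta_e_76(lab1, lab2):
    """CIE76 difference: Euclidean distance in L*a*b* (an assumption here)."""
    return math.dist(lab1, lab2)


def drift_report(baseline, readings, limit=DRIFT_LIMIT):
    """Return (date, drift, retire?) rows, oldest reading first."""
    rows = []
    for reading in sorted(readings, key=lambda r: r.measured_on):
        drift = delta_e_76(baseline.lab, reading.lab)
        rows.append((reading.measured_on, round(drift, 2), drift > limit))
    return rows


def needs_replacement(baseline, readings, limit=DRIFT_LIMIT):
    """True once any re-measurement has drifted past the limit."""
    return any(retire for _, _, retire in drift_report(baseline, readings, limit))
```

Each row compares a re-measurement against the original certification reading, so the log shows cumulative drift rather than quarter-to-quarter change.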

Reason #5: Substrate and Surface Texture Affect Color Perception

Same pigment formula. Two different substrates. Two different colors: measured, not imagined. This matters for decorative flooring, where embossed or matte finishes are routinely applied to the panel surface.

Surface texture isn’t a cosmetic variable. It’s a color variable. Research backs this up: fabrics with a surface roughness of Ra = 0.46 mm produce an average ΔE_CMC(2:1) of 2.86 under illuminant A alone. That’s not a borderline number. It sits well inside the “flagged for review” band before production even starts.

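Here’s what’s happening: every surface reflects some uncolored light off its top layer. A matte or embossed surface scatters that light in all directions, so it mixes into the color at every viewing angle and makes it look lighter and less saturated. A high-gloss surface concentrates it into one specular direction: the color looks deeper away from the glare and washes out to white inside it. Same tile. Same colorant. The gloss level changes what your eye reads as the actual color.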

Hue makes it worse. Orangish tones (h° = 0–90°) show the largest lightness and chroma shifts under different illuminants. Bluish-reds (h° = 270–360°) hold much steadier. If your SPC color approval process treats all hues the same across substrates, you’re measuring some samples with the wrong ruler.

Cross-substrate calibration errors pile on top of that. Calibrate a scanner on glossy photo media, then evaluate matte copy paper — mean ΔE jumps by 2–6x compared to matched-substrate calibration. The instrument isn’t broken. The substrate pairing is.

Lock Substrate Variables Before Approval, Not After

Your color approval documentation needs to specify:

– Surface roughness (Ra, in μm or mm) for each finish: matte, embossed, napped

– Gloss level (GU rating) and any soft-touch coatings

– Measurement geometry: specular included or excluded

– Per-substrate tolerance: ΔE_CMC(2:1) ≤ 2.0, matched to each substrate pair

One approval standard across mixed substrates isn’t a process. It’s a dispute waiting to ship.
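One way to enforce those documented variables is to refuse a comparison when the standard and the sample were measured under different surface conditions. The sketch below is illustrative only: the field names and the allowed gaps (0.5 μm for Ra, 5 GU for gloss) are hypothetical values, not figures from any standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SurfaceSpec:
    substrate: str           # e.g. "SPC, embossed"
    roughness_ra_um: float   # Ra in micrometres
    gloss_gu: float          # gloss units
    specular_included: bool  # True = specular included, False = excluded


def comparability_issues(standard, sample, max_ra_gap_um=0.5, max_gloss_gap_gu=5.0):
    """List the reasons a sample should not be judged against this standard.

    The gap limits are hypothetical defaults; agree on real ones in writing.
    """
    issues = []
    if standard.substrate != sample.substrate:
        issues.append("substrate differs")
    if standard.specular_included != sample.specular_included:
        issues.append("measurement geometry differs")
    if abs(standard.roughness_ra_um - sample.roughness_ra_um) > max_ra_gap_um:
        issues.append("surface roughness outside agreed gap")
    if abs(standard.gloss_gu - sample.gloss_gu) > max_gloss_gap_gu:
        issues.append("gloss level outside agreed gap")
    return issues
```

An empty list means the ΔE comparison is at least like-for-like. Any entry means the reading reflects the surface as much as the colorant.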

Reason #6: Communication Breakdowns in the Approval Workflow

The color is fine. The process is broken.

86% of employees blame poor communication for workplace failures. Poor communication costs organizations an estimated $1.2 trillion each year, and a real portion of that damage lives inside approval workflows no one ever audited.

Here’s the failure pattern inside most SPC color approval cycles:

– Feedback arrives vague: “the color looks off”, with no ΔE reference, no lighting condition, no comparison standard

– Approval requests get buried across email threads, Slack channels, and project apps, all at once, all in different places

– 63% of employees lose real work time sorting out communication problems. That time stretches your approval cycle, and no one measures it

The Perception Gap Nobody Admits

72% of managers think their feedback is on time. Only 48% of their teams agree.

That gap is not a personality conflict. It’s a structural failure. Add a supplier-buyer relationship into the mix — one side in Guangzhou, the other reviewing samples in a Chicago showroom — and the delay builds fast.

Just 15% of organizations follow through on feedback with clear next steps. Everyone else sends a comment and assumes it landed. It didn’t. Or it landed without context. So your supplier resamples the wrong thing — twice.

Fix the channel before you fix the color. Lock one approval thread. Require structured feedback: formula used, ΔE result, viewing condition, and specific rejection reason. Every round, every time.
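The structured feedback requirement can be sketched as a record that reports what is missing before a rejection is sent. The class name, field names, and the list of accepted formulas are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Optional

ALLOWED_FORMULAS = {"CIEDE2000", "CIE94", "CMC"}  # formulas named in this guide


@dataclass
class ColorFeedback:
    formula: str
    delta_e: Optional[float]
    viewing_condition: str
    rejection_reason: str

    def problems(self):
        """Return the gaps that make this feedback too vague to act on."""
        gaps = []
        if self.formula not in ALLOWED_FORMULAS:
            gaps.append("formula missing or not recognized")
        if self.delta_e is None or self.delta_e < 0:
            gaps.append("Delta E result missing")
        if not self.viewing_condition.strip():
            gaps.append("viewing condition missing")
        if not self.rejection_reason.strip():
            gaps.append("rejection reason missing")
        return gaps
```

Feedback that only says “the color looks off” fails three of the four checks, which is the point: the supplier gets sent back a list of gaps instead of a guess.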

How to Build a Reliable SPC Color Approval Protocol

For buyers sourcing SPC flooring internationally, color approval disputes usually appear during the sampling stage before bulk production begins.

Six reasons for failure. One way to stop them: a protocol that removes ambiguity before the first sample ships.

Here’s what that looks like in practice.

Lock the Rules Before Production Starts

Pre-production alignment isn’t a courtesy step. It’s the document that makes every downstream decision defensible.

Put four things in writing before anything goes into production:

– Reference standard: physical swatch or exact CIE L*a*b* values (e.g., L* 50.0, a* 20.0, b* -5.0)

– ΔE formula: CIEDE2000 is the closest to human vision. Lock it in the contract, by name.

– Tolerance thresholds: ΔE00 ≤ 0.8 passes. 0.8–1.5 triggers conditional review. Anything above 1.5 gets rejected.

– Lighting condition: D65/10° observer. Documented. Non-negotiable.

No formula in the contract means both sides bring their own ruler. You already know how that ends.
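Those four items fit in one small record that both parties can sign off on and that code can enforce. This is a minimal sketch: the class and field names are hypothetical, while the default values are the examples given above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ApprovalSpec:
    reference_lab: tuple = (50.0, 20.0, -5.0)  # example L*, a*, b* from the text
    formula: str = "CIEDE2000"
    pass_limit: float = 0.8
    review_limit: float = 1.5
    illuminant: str = "D65"
    observer_deg: int = 10

    def decide(self, delta_e_00: float) -> str:
        """Apply the written thresholds to one CIEDE2000 reading."""
        if delta_e_00 < 0:
            raise ValueError("Delta E cannot be negative")
        if delta_e_00 <= self.pass_limit:
            return "pass"
        if delta_e_00 <= self.review_limit:
            return "conditional review"
        return "reject"
```

Freezing the dataclass is a deliberate choice: once the spec is agreed, nobody should be able to adjust a threshold in the middle of an approval round.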

Run SPC Measurement as a Process Control Tool — Not a Checkbox

Submit 5–10 lab samples. Use a calibrated spectrophotometer, for example an X-Rite eXact or a Konica Minolta CM-700d. Calibrate it every day. Log every ΔE value against the standard.

From there, move to production monitoring. Run Xbar/R control charts across 20+ samples. Set control limits at ±3σ. If fewer than 95% of samples land within ΔE ≤ 1.0, or more than one outlier appears per 20 samples, production stops. Not pauses. Stops.

Teams that run this process without cutting corners see real numbers: 37% drop in defect rate, 35% less scrap, 50% fewer customer complaints.
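The control chart step can be sketched as follows, assuming ΔE readings are grouped into subgroups of five and that limits are set from a stable baseline run. The function names are hypothetical. A2, D3, and D4 are the standard Shewhart constants for subgroup size 5, which approximate ±3σ limits.

```python
from statistics import mean

# Standard Shewhart chart constants for subgroups of 5 readings.
A2, D3, D4 = 0.577, 0.0, 2.114


def xbar_r_limits(subgroups):
    """Compute Xbar and R chart limits from baseline subgroups of 5."""
    xbars = [mean(g) for g in subgroups]
    ranges = [max(g) - min(g) for g in subgroups]
    center, r_bar = mean(xbars), mean(ranges)
    return {
        "xbar_lcl": center - A2 * r_bar,
        "xbar_ucl": center + A2 * r_bar,
        "r_lcl": D3 * r_bar,
        "r_ucl": D4 * r_bar,
    }


def out_of_control(subgroups, limits):
    """Return indexes of subgroups whose mean or range breaks a limit."""
    flagged = []
    for index, group in enumerate(subgroups):
        xbar, rng = mean(group), max(group) - min(group)
        mean_ok = limits["xbar_lcl"] <= xbar <= limits["xbar_ucl"]
        range_ok = limits["r_lcl"] <= rng <= limits["r_ucl"]
        if not (mean_ok and range_ok):
            flagged.append(index)
    return flagged


def share_within(readings, tolerance=1.0):
    """Fraction of individual readings at or below the tolerance."""
    return sum(r <= tolerance for r in readings) / len(readings)


# Hypothetical data: 20 baseline readings, then one later subgroup.
BASELINE = [
    [0.55, 0.60, 0.62, 0.58, 0.65],
    [0.60, 0.57, 0.63, 0.61, 0.59],
    [0.58, 0.64, 0.60, 0.56, 0.62],
    [0.61, 0.59, 0.57, 0.63, 0.60],
]
LATER = [0.88, 0.92, 0.90, 0.95, 0.85]
```

In this made-up data every reading in `LATER` is still within ΔE ≤ 1.0, yet its subgroup mean sits far above the upper control limit set by the baseline. That is the “in spec but not stable” case in miniature.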

Make Every Approval Decision Traceable

Platforms like Pantone Connect and X-Rite InkFormulation do three things well. They generate timestamped approval PDFs. They share live ΔE comparisons. They export full 20-sample datasets. That’s not paperwork — that’s the evidence trail that ends disputes before they escalate.

Build an Escalation Path With Teeth

Readings diverge by more than ΔE 0.5 between buyer and supplier? Don’t argue. Re-measure shared samples together. Still no resolution? Bring in a third-party lab like Intertek. Thirty samples, one agreed instrument, cost split between both parties.

The outcome is clear. Third-party results exceed tolerance — the supplier reworks at 100%. Results fall within spec — the buyer accepts. No gray zone. No finger-pointing.
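The escalation path reads naturally as a decision function. The sketch below is one possible encoding. The function and parameter names are hypothetical, and the default tolerance of 1.5 is only a placeholder for whatever the contract states.

```python
def next_step(buyer_de, supplier_de, joint_agreed=None, third_party_de=None,
              tolerance=1.5, divergence_limit=0.5):
    """Return the next action in the escalation path described above.

    joint_agreed: None until the joint re-measurement has happened, then
    True or False. third_party_de: None until the lab result is in.
    """
    if abs(buyer_de - supplier_de) <= divergence_limit:
        return "no escalation: readings agree within the divergence limit"
    if joint_agreed is None:
        return "re-measure shared samples together"
    if joint_agreed:
        return "resolved by joint re-measurement"
    if third_party_de is None:
        return "send samples to the agreed third-party lab"
    if third_party_de > tolerance:
        return "supplier reworks at 100%"
    return "buyer accepts"
```

Writing the path down this way leaves no step where either side can stall: every state has exactly one next action.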

Set a time limit on approval validity too. Six months maximum. Also, trigger a reset any time a bulk dye lot changes by more than 5%. Color standards drift over time. Your contracts need to catch that drift before it becomes a dispute.

SPC Color Approval FAQ

What is Delta E in SPC color approval?

Delta E (ΔE) is a numerical value that measures the color difference between two samples using a spectrophotometer.

Instead of judging color visually, the instrument compares the measured color values against a reference standard in the CIE L*a*b* color space.

In SPC flooring production, Delta E helps determine whether the color difference between a production tile and the approved sample stays within the allowed tolerance range.
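As a minimal illustration, the simplest Delta E formula, CIE76, is the straight-line distance between two points in CIE L*a*b* space. The function name and the sample values below are made up for the example, and contracts often specify a newer formula such as CIEDE2000 instead.

```python
import math


def delta_e_cie76(lab_standard, lab_sample):
    """CIE76 color difference: Euclidean distance in CIE L*a*b* space."""
    d_l = lab_sample[0] - lab_standard[0]
    d_a = lab_sample[1] - lab_standard[1]
    d_b = lab_sample[2] - lab_standard[2]
    return math.sqrt(d_l ** 2 + d_a ** 2 + d_b ** 2)


# Hypothetical readings: approved standard vs. one production tile.
standard = (50.0, 20.0, -5.0)
tile = (50.5, 20.8, -4.4)
difference = delta_e_cie76(standard, tile)
```

Here the tile is slightly lighter, redder, and yellower than the standard, and the three small shifts combine into a single ΔE of about 1.12.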

Typical industry ranges include:

| Delta E | Interpretation |
|---|---|
| 0–1.0 | Almost no visible difference |
| 1.0–2.0 | Slight difference, usually acceptable |
| 2.0–3.5 | Visible difference, often reviewed |
| >3.5 | Clear mismatch requiring correction |

Even when Delta E passes the tolerance limit, visual differences can still appear under different lighting environments.

Why does the same SPC sample look different under different lighting conditions?

This effect is known as metamerism.

Two colors may appear identical under one light source but different under another because their spectral reflectance curves interact differently with the lighting spectrum.

For example, an SPC flooring sample evaluated under laboratory lighting may match perfectly under a D65 daylight simulator. However, the same sample may look different under:

– warm LED lighting in showrooms

– fluorescent lighting in retail stores

– natural sunlight entering through windows

Because of this, many manufacturers evaluate samples under multiple light sources such as D65, TL84, and incandescent lighting before approving color.

What Delta E tolerance is normally accepted for SPC flooring?

Tolerance levels vary by industry, but most SPC flooring manufacturers use a Delta E tolerance between 1.0 and 2.0.

Typical benchmarks include:

| Industry | Common Delta E Tolerance |
|---|---|
| Textiles | ≤1.0 |
| Plastics and SPC flooring | ≤1.5 |
| Packaging printing | ≤2.0 |

Some buyers require tighter tolerances for decorative flooring products, especially when the flooring is used in large open spaces where color variation becomes easier to notice.

For this reason, suppliers and buyers should always specify the Delta E formula and tolerance level in the contract before production begins.

How can manufacturers reduce SPC color approval disputes?

Most SPC color disputes can be avoided by standardizing the approval process.

Key steps include:

– Define the reference standard clearly. Use either a certified physical sample or precise CIE L*a*b* color values.

– Agree on the Delta E formula. Many manufacturers now use CIEDE2000 because it aligns closely with human color perception.

– Specify the viewing condition. Standard lighting such as D65 daylight should be used for laboratory evaluation.

– Calibrate instruments and monitors regularly. Spectrophotometers and display screens must be calibrated to ensure consistent measurement.

– Document every approval step. Recording test results, lighting conditions, and tolerance thresholds reduces ambiguity during production.

A structured approval protocol helps ensure both buyers and suppliers evaluate color using the same standards.

Conclusion

Color approval issues show up most often in large international flooring supply chains, where many production batches must keep the same decorative film appearance. These disagreements don’t start because buyers are difficult or suppliers are careless. They start because two parties are seeing different things: different light, different screens, different reference points, different ideas of what “acceptable” means.

That’s the real takeaway here.

Fix the environment. Align on the standard. Document every step of your SPC color approval process before a single sample ships. Don’t wait until a dispute lands in your inbox.

Here’s the truth most brands learn too late: by the time you’re arguing over whether a color “passes,” you’ve already lost time, money, and trust.

Stricter language in your vendor contracts won’t solve this. A smarter SPC color approval protocol will. One that removes the guesswork before it turns into conflict.

Start by auditing the six failure points outlined above. Find your weakest link. Close it.

Disputes don’t fix themselves — but a clear process will.